AI Literacy: Understanding and Working with AI and its Limits

What does “AI Literacy” really mean?

When data literacy first emerged as a core competency, most people equated it with the ability to code in Python. But data literacy was really about using data to define business questions, interpret results and turn insights into decisions. AI literacy faces the same structural misconception.

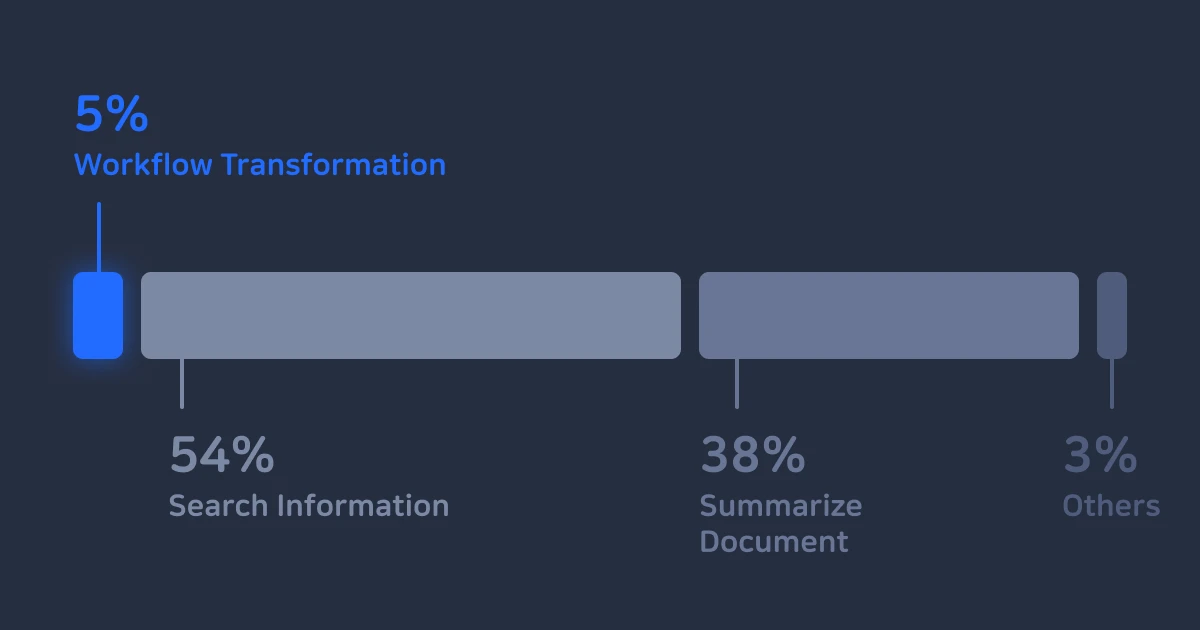

According to EY’s 2025 survey, companies are leaving 40% of AI’s potential productivity gains on the table, and low AI literacy is the culprit. While 88% of employees report using AI at work, most stick to surface-level tasks, such as searching and summarizing. Only 5% are using AI to fundamentally rethink how they work. (EY 2025 Work Reimagined Survey)

AI competency is often reduced to knowing how to write a good prompt or being familiar with the functions of specific tools. But genuine AI literacy means understanding how AI actually works, recognizing where it can go wrong and managing those risks. Think of AI less as a simple tool and more as a capable, but fallible, virtual team member. That means AI literacy is, at its core, the ability to collaborate with AI safely and effectively.

Challenge to AI Literacy: The Gap in Training, Practice and Management

Most companies have already rolled out AI tools like ChatGPT or Claude. But is the investment in training programs and technology actually improving competencies? So far, the effectiveness of that investment remains unclear.

According to Microsoft’s 2025 Work Trend Index, AI-forward companies are seeing real productivity gains, while those lagging behind are stagnating. The difference comes from the organizational capability to redesign workflows with AI and manage the risks that come with it.

- Feature-first fragmentary training: Prompt-writing workshops look great on completion reports, but they don’t prepare employees to deal with AI inside complex, real-world business situations.

- Ethics training disconnected from real risks: Abstract discussions about AI ethics miss what employees actually need. Practical answers to questions like “Why does AI sometimes make things up?” or “Is it safe to feed our internal data into AI?” are what matter. Without sufficient risk awareness, security incidents and flawed decisions become more likely.

- One-off use disconnected from outcomes: If employees leave training still using AI only to look things up, the deeper competencies in automating repetitive tasks and streamlining workflows go untapped.

How Telta Defines AI Literacy

Real AI literacy goes beyond simply knowing how to use a tool, and is built on two pillars: the foundational understanding to use AI safely, and the practical use to drive real results. Telta developed the AI Skill Taxonomy, an objective benchmark for measuring AI competencies, drawing from frameworks by OpenAI, Anthropic and Stanford, as well as job data from Fortune-ranked, AX-leading enterprises.

AI Understanding and Risk Awareness

The bedrock competencies for safe, effective AI use.

- Technical Understanding: Knowing how AI models work and where their limitations lie

- Risk Awareness: Identifying and mitigating threats in AI use like security vulnerabilities, data privacy exposure and potential bias

- Tool Understanding and Selection: Selecting the most suitable AI model or tool based on the nature and context of the task

AI Use Competency

The hands-on competencies for solving real problems and creating value.

- Task Planning: Structuring complex goals and constraints into prompts that AI can actually work with

- Execution and Interaction: Driving results through iterative dialogue and feedback to improve quality

- Result Validation: Critically evaluating AI outputs before finalizing deliverables

The Telta AI Literacy Assessment doesn’t test what people know about AI.

It evaluates how they think and act when using it. Many organizations rely on quiz-based assessments, but those measure what people know, not what they can actually do. Telta’s simulation-based assessments mirror real work scenarios to reveal employees’ true proficiency.

1. Track the thinking, not just the result

The assessment doesn’t simply score answers as right or wrong. It analyzes the full conversation log and behavioral data as participants work through problems in real dialogue with AI, making visible how people actually think: how they reasoned through a task and how they refined their prompts.

2. Objective analysis based on a global framework

Based on the Telta AI Skill Taxonomy defined above, each employee’s competencies are analyzed from multiple perspectives. Rather than a single score, participants receive a detailed 15-page report with specific behavioral feedback, such as “lacks structured prompting” or “skips fact-checking before validating outputs.”

3. Strategic data for organizational AX

For HR teams and executives, Telta delivers an Organizational AX Report* that paints a full picture of AI maturity across the company. Broken down by department and role, it helps prioritize training investments and design tailored curricula.

*Organizational reports are available for assessments of 50 or more employees.

The One Variable That Separates AX Leaders from the Rest: AI Literacy

As AI becomes the new normal, anyone can spin up an AI model with a single click. Yet with the same tools, some organizations barely move beyond using AI as a search engine, while others transform entire workflows and business models. The one variable that explains the gap is the AI literacy of the people who use it.

The real ROI of AI adoption isn’t determined by how many tools you’ve deployed or how many training hours you’ve logged, but by the competencies of the people behind the keyboard. Organizations with strong AI literacy, those that understand the potential of AI, control its risks and fundamentally rethink the way work gets done, don’t just keep up with change; they lead it.
